AI, India, and the question from my NCERT textbook

I used to wonder, when I was eleven or twelve, why the front of every NCERT textbook had a paragraph that seemed to be written for a prime minister. It was called Gandhiji ka Mool Mantra in Hindi, and Gandhiji's Talisman in English. As far as I could tell, it was a moral test for somebody about to make a big decision. The kind of test you might apply before signing a treaty or passing a budget. I had no big decisions to make. I was eleven. I was being given this paragraph anyway, on the second page of every textbook I owned, in subject after subject, year after year.

I read it because I was the kind of kid who read it. The teacher would be teaching at the front of the room and I would be somewhere else, often on the front matter pages of whichever book was open in front of me. Distracted. The Taare Zameen Par kid before that film existed. The actual lesson could wait. Whoever wrote the front matter, I was their audience. That apparently included Gandhiji.

It came back to me a few days ago. I have been spending most of my time on AI for months. Most days I think it is the most important technology I have lived through. Some of what I am seeing I think I understand. Some of it I keep returning to without finding answers.

The part I keep coming back to has nothing to do with engineers losing their jobs at trillion dollar companies. It has to do with the people I have spent a fair part of my career trying to design for, and who are almost never in the room when the conversation about AI is being held. The bottom of the pyramid. The next billion. The invisible service layer. The grey collar and blue collar workers whose lives most of urban India encounters every day and rarely thinks about.

I have spent time, across two of my jobs, with people from this world. One job was about building a product for them. The other was about making the television they watched. Both jobs eventually came down to the same thing. You cannot design or write for a person you have not sat across from. So I sat across from a lot of them, in small towns and villages, in their kitchens and in their living rooms, drinking their tea and asking them questions they had no real reason to answer.

There is a house I went to in 2018 that comes back to me when I think about this. It was in a village in rural Nasik. The woman who lived there was a homemaker, in her late thirties or early forties. I think her name was Sunita Tai. (Tai is what you call an older woman in Marathi. Like Didi, but local.) Her husband was a day labourer. She sold tomatoes on the roadside outside the village. They had a television set in their one room house, a small CRT, second hand, that they had bought after saving for several months.

I was there to understand her life and her content choices. She answered patiently. She told me about her days. She told me which serials she watched and when she watched them. She was generous with her time, which she did not have a lot of.

And then, somewhere in the conversation, without any prompting, she said the sentence that I remember every time I read about artificial intelligence.

"Our life is done and it is what it is. Everything I am doing is for my children, to give them a better future."

She did not say it dramatically. She said it the way one notes the weather. It was a fact about her life. The work she was doing was not for her. It was for the boy who was eleven or twelve at the time, who was sitting in the next room, and who was going to be the first person in three generations of his family to maybe finish school and maybe get a salaried job and maybe move out of the room he was sitting in.

He would be nineteen or twenty now. In a year or two he will start looking for work.

And what I want to ask about AI, honestly, is how it is going to be useful for him.

This is the moment that the textbook page came back. I dug it up and read it again, properly.

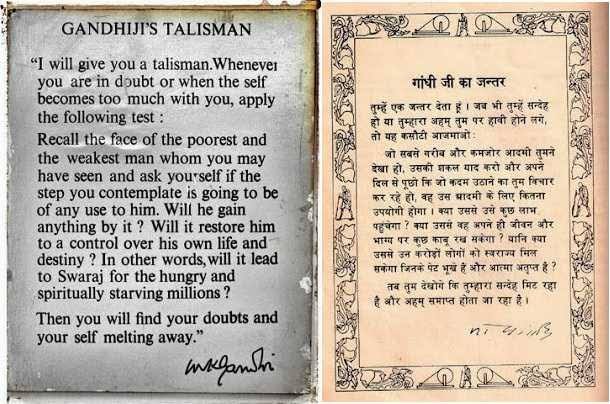

I will give you a talisman. Whenever you are in doubt, or when the self becomes too much with you, apply the following test. Recall the face of the poorest and the weakest man whom you may have seen, and ask yourself, if the step you contemplate is going to be of any use to him. Will he gain anything by it? Will it restore him to a control over his own life and destiny? In other words, will it lead to swaraj for the hungry and spiritually starving millions? Then you will find your doubts and your self melt away.

Gandhiji wrote this in August 1947 or early 1948. The face he asks me to recall, today, is Sunita Tai's. The question he asks me to ask, today, is whether the thing I am thinking about will give her son control over his own life.

I do not have a clean answer. By default, the answer is no.

The products that have made me so curious about AI live on devices her family does not own, in languages her son does not read fluently, behind subscription paywalls priced for someone with a credit card and a monthly disposable income larger than her household's annual one. His school, if he is still in school, is not factoring this in. His teachers, if they have heard of these tools, are not trained to use them. The path from a tool that costs twenty dollars a month in San Francisco to the inside of his classroom in rural Maharashtra is a path that is currently not being walked.

There are pieces of AI that already help people like him without him knowing. Better targeting on a government welfare scheme. A crop disease alert his uncle's WhatsApp group might receive. An AI-assisted screening at the primary health centre that catches anaemia in a child earlier than a human nurse alone would have. These are real and they are not nothing. But they are also not what is being talked about when an American CEO says that AI will give everyone a personal superintelligence.

I have been reading what the CEOs of the AI labs have been saying. Mark Zuckerberg is talking about everyone getting their own personal superintelligence. Dario Amodei is saying that the short term economic impact of AI is being overestimated and the long term impact is being underestimated. Sam Altman is talking about abundance, about a coming surplus that we will figure out how to distribute.

None of them are wrong about the world they live in. But there is a hidden subject in all three of these claims. Whose superintelligence? Whose long term? Whose abundance? The "everyone" in these claims is not Sunita Tai's son. The world they describe is a world of high-bandwidth connectivity, English fluency, cognitive professional work, credit cards, and a state with the capacity to redistribute. The world he lives in does not look like that, and it is the world that two-thirds of humanity actually lives in.

The textbook page in front of me has been asking, for seventy-eight years, whose face the planner is supposed to recall. I do not think the people answering for AI today have been asked the question.

And the early data, including data from Anthropic itself, suggests Gandhiji's question is not idle.

Anthropic's labour market researchers found that, between late 2024 and early 2026, hiring of workers between the ages of twenty-two and twenty-five fell by fourteen percent in occupations most exposed to AI. Unemployment in those occupations did not rise. The work was simply not being given to new people. The senior worker kept her job. The junior worker did not get hired.

In the same period, the top twenty countries on earth went from accounting for forty-five percent of all per-capita Claude usage to forty-eight percent. The world is diverging, not converging. The story that AI will democratize access is, so far, losing in the data. The places that already had the advantage are pulling further ahead.

This matters for India in a specific way that an American reading this might not see. India did not build a manufacturing economy. We tried, and we mostly did not succeed. What we built instead, after 1991, was a services economy. The largest formal sector employer of our young graduates is the IT services industry. TCS. Infosys. Wipro. HCL. The Cognizants and Capgeminis and Accentures of the world. Together they employ over five million people, and in the boom years they hired hundreds of thousands of fresh graduates each year. They hired them straight out of engineering colleges, most of them tier two and tier three, most of them first generation graduates, most of them from households exactly like Sunita Tai's.

That is the ladder. It is the actual ladder. The story we tell ourselves about Indian middle class formation, the one in which the maid's son goes to engineering college and ends up at Infosys and ends up middle class, is a story about that pipeline. It is the story I have been silently assuming, every time I have imagined Sunita Tai's son finding a job that would do for his life what her tomato cart could not.

And that is the pipeline the Anthropic data shows breaking. Not collapsing. Breaking. The senior engineer at TCS still has her job. The eighteen year old looking to get hired into TCS, in 2026, is competing for a smaller number of seats than she would have been in 2020. Her seat is being absorbed by a model that costs her future employer thirty dollars a month.

So when Sunita Tai says everything she does is for her son, the question of whether AI helps her becomes a literal one. AI helps her if it does not break the ladder her son was supposed to climb. Right now the ladder is breaking, and nothing is being built underneath it.

Our state is not unaware of all this. The Indian government, through the IndiaAI Mission, has committed about ten thousand crore rupees to artificial intelligence over five years. The money is going to compute. To datasets. To frontier model development. To talent. To an India AI Safety Institute. These are real programs. They are not theatre.

But the question they are answering is not the question the textbook page asks. The question they are answering is how India participates in the global AI economy. Sovereign capacity. Indian foundation models. The ability to compete with American and Chinese labs. These are legitimate goals. They are not the talisman question.

What is missing is a line in the budget for the question of how AI reaches Sunita Tai's son. There is no allocation, of any meaningful size, for the deployment of vernacular AI to the bottom fifty percent of our country. There is no policy framework that asks the talisman question of every AI program before approving it. There is industrial policy. There is no antyodaya policy.

A few Indians are thinking about this. Reuben Abraham, Pratap Bhanu Mehta, Devesh Kapur, and Raghuram Rajan have all touched on it in their work. The Economic and Political Weekly publishes the most rigorous critical writing. But the public conversation is thin. The academic work has not translated into the register that changes policy or shapes the conversation at a dinner table. We are early to a question that nobody has fully decided to ask.

And even when we ask it, I do not think the answer is going to come from talking. I think it is going to come from building.

The problem is not that the right essays have not been written. The problem is that the products themselves do not reach Sunita Tai's son. They are priced for him to never use them. They are designed for him to never use them. They run on devices his family does not own, in interface conventions he was never taught, behind authentication walls he does not trust.

If we want him to benefit from AI, somebody has to build that layer. Indian startups, yes. But also the trillion dollar companies that today own the foundation models. They have the resources. They have the capability. They do not currently have the incentive.

Some years ago Facebook tried to bring the internet to India through a program called Free Basics. The program failed for some good reasons. But the question it was trying to answer was the right one. How do you reach the people who are technically online and functionally not? Should the AI labs have a program like that today? I think they should. And I think the question of what it looks like is the most interesting product question of the next decade.

It also has to be priced for him. Most international platforms have figured out, eventually, that India is different. Netflix learned that the price point for an Indian household was not nine dollars and ninety-nine cents but two dollars, and that the content needed to be local, and that mobile-only plans were not a downgrade but the actual product. The companies that succeeded in India treated India as a design philosophy, not as a localization problem. The companies that failed treated India as the United States with a smaller wallet.

I would love to know what the people running the foundation labs actually think about this. What do Anthropic and OpenAI and Google DeepMind, as firms, see when they look at a household earning fifteen thousand rupees a month, in a village outside Nasik, with a son about to enter the job market? Is there a strategy? Is there a product roadmap? Or is the bottom of the pyramid, for now, simply not on the map?

I know there are rebuttals to all this.

India Stack will solve it, the way Aadhaar and UPI reached the bottom of the pyramid. True, but India Stack reached the bottom because somebody made a deliberate, capital-intensive bet, and there is no equivalent bet on AI yet.

It is too early to tell, the way the internet took twenty years and mobile phones took fifteen to redistribute their benefits. True, and the choices being made today are going to shape the next decade either way.

Sunita Tai's son could leapfrog the broken ladder and become a software founder from his village. True for the few, but entrepreneurship has low base rates everywhere, and the mass ladder is what matters for the mass case.

AI in agriculture and healthcare and welfare delivery already serves the poor in ways I am underplaying. True, but the dominant aspiration in Sunita Tai's life runs through her son's mobility, and his mobility runs through institutions that AI is currently disrupting more than serving.

In a year or two, Sunita Tai's son is going to start looking for work. He may not get the job his mother was working towards. He may get something else. He may get nothing for a while. He may eventually be helped by a tool that does not exist today and that is built, at some point, by a company that decided he was worth building for.

I do not know which of these will happen. Nobody does.

What I do know is that the textbook page from my childhood is still asking its question, and the question is, in 2026, sharper than it has been in a long time. Recall the face. Will the step you are contemplating give that face control over his own life and destiny?

The CEOs are contemplating their step. The Indian state is contemplating its step. And in a one room house in a village near Nasik, a woman I met eight years ago is doing what she has been doing every day since, which is selling tomatoes for the future of a boy who is now almost a man.

The question is not whether her work will be enough. It is whether ours will be.